You Don't Have to Wait for KAIROS

A leaked Claude Code daemon called KAIROS and Karpathy's viral knowledge base blueprint just revealed the future of personal AI. Here's what's actually working today, and how to start building it this weekend.

TL;DR: Last week, Anthropic leaked Claude Code's entire source, revealing KAIROS, an always-on AI daemon that decides when to act on your behalf. Days later, Andrej Karpathy published a blueprint for persistent AI knowledge bases. Together, they're a roadmap for where personal AI is heading. But you don't have to wait for any of it to ship. Here's what's actually working right now, what isn't, and how to start building today.

"What Do You Actually DO With This Thing?"

I taught another second brain class this week. Walked people through the full setup: Obsidian, Google Drive, Claude Code, the terminal. Showed them how the pieces connect. Watched the lightbulbs go on when they realized what was possible.

But there's always one question you run out of time to answer properly. And it's the one that matters most: what do you actually do with it? I'm not talking about the setup or the tools. I'm talking about the mindset. What does it look like when this thing is actually working for you, instead of just being configured and sitting there?

Then, in the same week, two things landed that answered that question better than any class could.

The first was an accident. The second was a tweet. Together, they paint a picture of exactly where personal AI is heading, and why the people building now, even roughly, are going to be ready when it arrives.

What You'll Walk Away With

By the end of this post, you'll have:

- An understanding of two signals from this week that define where personal AI is heading next

- An honest read on what's working with AI agents today and what's still expensive theater

- A three-level framework you can use to start building your own proactive AI system this weekend

- The single most important thing to build first, and why almost everyone gets it backwards

Two Signals That Show Where This Is All Heading

Signal 1: The Claude Code Source Leak

On March 31, Anthropic shipped a routine update to Claude Code via npm, the package manager that millions of developers use every day. But this time, a missing .npmignore file meant they accidentally included a 59.8 MB source map containing the entire unobfuscated source code. 512,000 lines. Roughly 1,900 TypeScript files. 44 hidden feature flags. Everything.

The security implications were real. A malicious axios dependency was briefly bundled in the same window, and Anthropic moved fast to address it. But that's not what made the leak important.

What mattered was the product vision hiding behind those feature flags.

KAIROS. Referenced extensively throughout the codebase, KAIROS is an unreleased system that turns Claude Code into a persistent background daemon. An always-on agent that doesn't wait for you to open your terminal and type a prompt. It operates in the background, decides when to act based on context, and handles sessions autonomously. This isn't a chatbot. This is an AI coworker.

autoDream. While you're idle, sleeping, commuting, living your life, autoDream runs a memory consolidation process. It merges observations from across your sessions, removes logical contradictions, and converts tentative notes into confirmed facts. Think of it as garbage collection for your agent's understanding of you. Every time you come back, it knows you a little better.
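To make the consolidation idea concrete, here is a toy sketch of what "merge observations, resolve contradictions, promote tentative notes" could look like. This is not Anthropic's implementation, and nothing in it comes from the leaked code. The `Observation` fields, the "most recent value wins" rule, and the `confirm_after` threshold are all assumptions made for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    key: str      # what the note is about, e.g. "preferred_editor" (hypothetical)
    value: str    # what was observed
    session: int  # higher means more recent


def consolidate(observations, confirm_after=2):
    """Collapse raw observations into one memory entry per key.

    Assumed rules: contradictions are resolved by keeping the most recent
    value, and a value counts as confirmed once it has been seen in
    `confirm_after` distinct sessions.
    """
    by_key = defaultdict(list)
    for obs in observations:
        by_key[obs.key].append(obs)

    memory = {}
    for key, group in by_key.items():
        latest = max(group, key=lambda o: o.session)
        # Count distinct sessions that agree with the surviving value.
        agreeing = {o.session for o in group if o.value == latest.value}
        status = "confirmed" if len(agreeing) >= confirm_after else "tentative"
        memory[key] = {"value": latest.value, "status": status}
    return memory
```

Feed it a week of session notes and you get back one entry per topic, with stale or contradicted values dropped. A real system would need something smarter than "latest wins," but the shape is the same.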

ULTRAPLAN. For complex tasks that need serious thinking time, ULTRAPLAN offloads the work to a remote cloud container running Opus, Anthropic's most powerful model, and gives it up to 30 minutes to reason through the problem. You can approve the result from your phone or browser, and a special sentinel brings the output back to your local terminal. Planning that used to require you sitting at your desk babysitting a prompt now happens while you grab coffee.

The shift these features represent is fundamental. We're moving from reactive AI, where you ask and it answers, to proactive AI that works while you're away, surfaces what matters, and takes action on your behalf.

Anthropic isn't building a better chatbot. They're building an operating system for your work.

Signal 2: Karpathy's Knowledge Base Blueprint

Days later, Andrej Karpathy, the former Tesla AI director who coined the term "vibe coding," published a tweet that went viral. His observation was simple and devastating:

"A large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge."

His core insight: every time you ask an LLM a question, it's rediscovering knowledge from scratch. There's no accumulation. No memory. No compounding. You get a brilliant answer, and then it evaporates.

His solution was to stop treating AI as a question-answering machine and start treating it as a knowledge compiler. Raw data goes in: web pages, documents, notes, whatever you're working with. The LLM compiles that into a persistent, structured markdown wiki, complete with summaries, cross-references, and interlinks. Then your queries feed back into the wiki, and the knowledge compounds over time.

From his idea file, published as a GitHub gist designed to be copy-pasted directly into any LLM agent:

"Instead of just retrieving from raw documents at query time, the LLM incrementally builds and maintains a persistent wiki, a structured, interlinked collection of markdown files that sits between you and the raw sources."

He uses Obsidian as the viewing layer. The LLM writes. You read.
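The "compile" step can be sketched in a few lines. This is a minimal illustration of the pattern, not Karpathy's code: `compile_source`, `slugify`, and the `index.md` file are names invented here, and `summarize` stands in for whatever LLM call you would plug in. The only real convention it relies on is Obsidian's `[[wikilink]]` syntax.

```python
import re
from pathlib import Path
from typing import Callable


def slugify(title: str) -> str:
    """Turn a title into a filesystem-friendly note name."""
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


def compile_source(raw_text: str, title: str, wiki_dir: Path,
                   summarize: Callable[[str], str]) -> Path:
    """Compile one raw source into a wiki page and link it from the index."""
    wiki_dir.mkdir(parents=True, exist_ok=True)

    # The page itself: a heading plus whatever the summarizer produced.
    page = wiki_dir / f"{slugify(title)}.md"
    page.write_text(f"# {title}\n\n{summarize(raw_text)}\n", encoding="utf-8")

    # The index: one wikilink per page, added only once.
    index = wiki_dir / "index.md"
    if index.exists():
        lines = index.read_text(encoding="utf-8").splitlines()
    else:
        lines = ["# Index", ""]
    link = f"- [[{page.stem}]]"
    if link not in lines:
        lines.append(link)
    index.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return page
```

Passing the summarizer in as a function keeps the sketch independent of any one model or SDK. Re-compiling the same source overwrites its page but leaves a single index entry, so the wiki stays tidy as it grows.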

If that sounds familiar, it should. It's an AI second brain. A different vocabulary, a slightly different architecture, but fundamentally the same idea I've been writing about for the past year. Obsidian as the substrate. Markdown as the universal format. AI as the engine that reads, writes, and connects. Karpathy just gave the architecture a name and put a viral tweet behind it. The idea was already alive in this corner of the internet, and a lot of us have been building it for a while.

The internet moved fast on his version too. Within days, people had built Claude Code plugins, skill files, and voice-first implementations with Telegram, all based on his architecture. The gist itself reads like a product spec for the knowledge layer that's been missing from most AI agent setups.

The Connection

Here's why these two signals matter together. Karpathy described the knowledge layer, the substrate that gives an AI agent persistent, compounding context about you and your world. The Claude Code leak showed us the agent layer that sits on top of it, the daemon that runs in the background, consolidates memory, and takes action proactively.

Knowledge layer plus agent layer. That's the blueprint for where personal AI is heading.

And you don't have to wait for any of it to ship.

But Wait. Is Any of This Actually Working?

I know how this reads so far. KAIROS! Persistent daemons! Knowledge that compounds! It sounds like the future just arrived and all you need to do is plug in.

But let's be honest for a second, because there's a growing gap between AI demos and AI results, and this week delivered a perfect example of both sides.

On April 4, Anthropic cut off Claude subscription coverage for OpenClaw and other third-party agent frameworks. Thousands of users who'd been running always-on agents on their flat-rate Claude Pro and Max subscriptions woke up to a new reality: API pricing. The cost increase? Up to 50x. Users who were paying $200 a month for Claude Max are now looking at $1,000 to $5,000 in monthly API costs for the same usage patterns, which works out to 5x to 25x on that plan alone.

The reaction was immediate. Forums lit up with people sharing their bills, hundreds of dollars a week on calendar checks and email drafts. "How to cancel Claude subscription" search volume spiked overnight. The dream of a cheap, always-on AI assistant hit a wall called unit economics.

And the skepticism goes deeper than billing. AI agents in 2026 are, as one industry analysis put it, "closer to junior staffers who work quickly, confidently, and often incorrectly, requiring constant review and cleanup." Studies show firms deploying AI tools are reporting new inefficiencies. Duplicated work. Increased oversight burdens. Time spent correcting AI-generated errors. There's a lot of productivity theater happening, with impressive demos and "game changer" tweets, but sustained, compounding value? That's rarer than the hype suggests.

So here's the honest question. Is this stuff actually working for anyone? Or are we all just performing productivity for social media?

I'm not going to pretend I have it all figured out. But I've been building this for months, and I can tell you what's delivering real value and what turned out to be expensive theater. That distinction matters more than any feature flag.

What's Actually Working from the Trenches

I've been building RonOS, a personal AI operating system, for months. It's an Obsidian vault with over 2,000 notes, connected to Claude Code, scheduled tasks, health integrations, and a fleet of tools I've stitched together one experiment at a time. Some of it works beautifully. Some of it was a waste of time. Here's what I've learned.

The Daily Brief Is Level One

This is where most people start, and it's a great starting point. A scheduled task (in my case, a cron job) runs every morning, pulls context from my second brain, and delivers a briefing: what's on my calendar, what projects need attention, what I said yesterday that I should follow up on today.

It's useful. It saves me 15 minutes of morning triage. But it's fundamentally reactive. It's a summary of what already exists. It doesn't do work. It doesn't create new connections. It's a report, not an agent.

If this is where you are, you're ahead of most people. But it's not the destination.

The Long-Running Loop Is Level Two

This is where things get real, and it's the pattern I keep coming back to. Instead of one-shot prompts (ask a question, get an answer, move on), you set up recurring loops where your agent does sustained work across days and weeks.

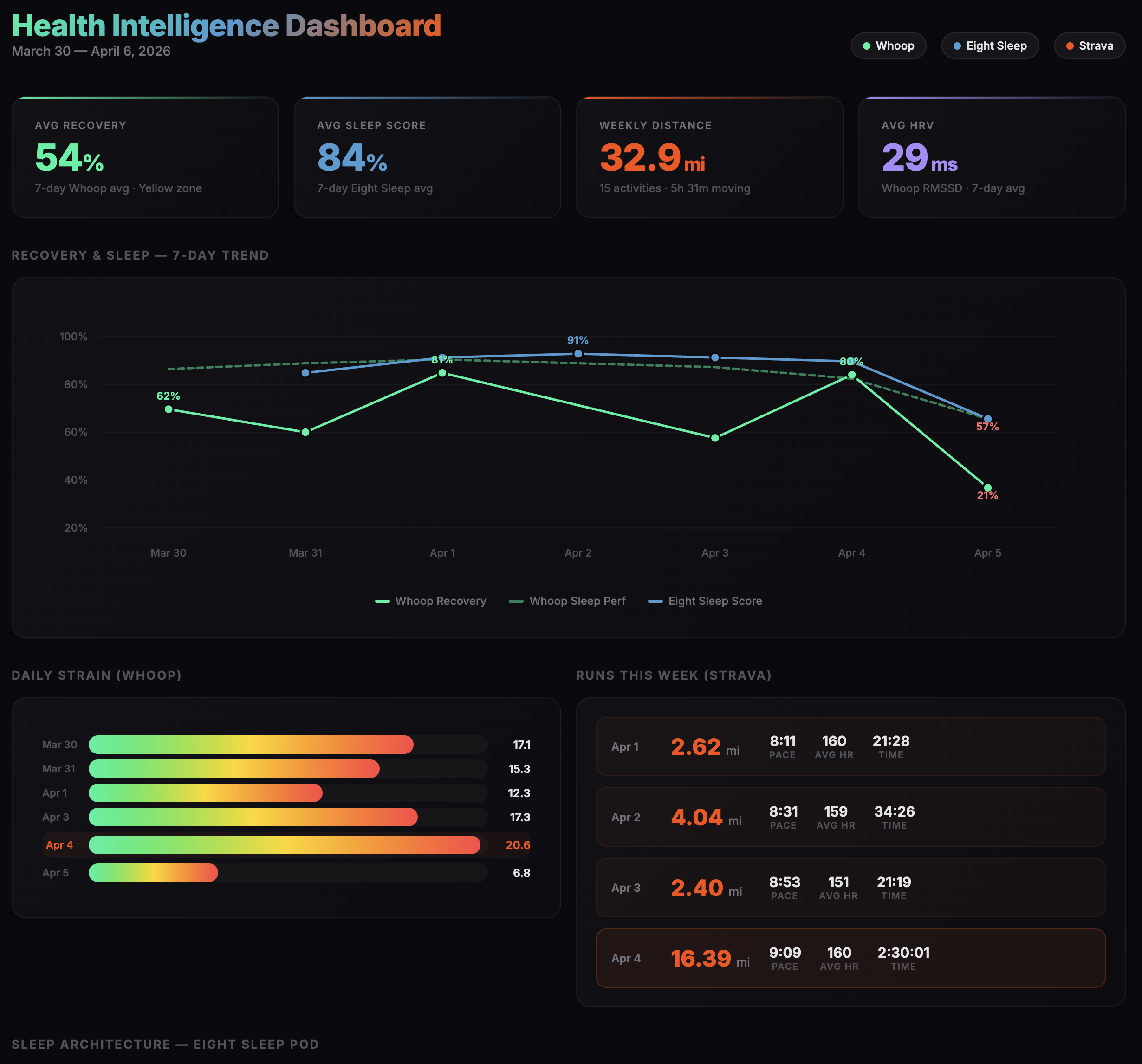

Here's a concrete example. I track my health across three different systems: Whoop for recovery and sleep, Apple Health for activity and vitals, and Strava for workouts. Each of those generates data. None of them talk to each other. And none of them know anything about me beyond the numbers.

So I built a loop. My agent ingests the data, then goes and does proactive research, not just summarizing my stats but searching the web for health insights applicable to my specific situation. It synthesizes what it finds with my actual data and turns it into a plan. "Based on your recovery score and sleep trends this week, here's what I'd adjust. Here are some things we're going to try."

It's a health coach that works on my behalf in the background. While I sleep. While I go about my day. And because it has persistent context in my second brain, it's not starting from scratch each time. It remembers what we tried last week, what worked, what didn't.

This pattern isn't limited to health. It applies to career planning, blog strategy, relationship maintenance, and hobby projects. Anywhere you have ongoing goals and accumulating context, a long-running loop beats a one-shot prompt every time.

This is the prototype of what KAIROS will automate natively. The difference is that right now, I'm wiring it together with scheduled tasks and existing tools. When KAIROS ships, the plumbing disappears and the pattern just runs.

I'll be transparent, though. Not every loop has worked, and I think that's actually okay for a good reason. Take personal finance as an example. I'd love to have an agent that ingests every transaction, synthesizes my spending patterns, and proactively flags issues the way my health loop does. But the platforms that hold that data are locked down by design. They're not built to make it easy for a bot to automatically pull and ingest sensitive financial information without a human in the loop. And honestly, I'm okay with that. That data is too sensitive to automate away. So my finance loop is more hands-on than my health loop, and probably should be. Some loops are going to stay manual for a while, and the trick is knowing which ones.

The Knowledge Layer Is the Foundation Nobody Talks About

This is what Karpathy nailed. Without a persistent knowledge base, your agent rediscovers everything from scratch every session. It doesn't matter how powerful the model is or how clever your prompts are. If your agent has no memory, it has no leverage.

My Obsidian vault is the substrate that makes everything else work. Two thousand notes spanning health data, career goals, project documentation, meeting notes, personal reflections, relationship context. It's the difference between "AI answered my question" and "AI knows my context and acts on it."

When I ask my agent to help me plan my week, it doesn't just look at my calendar. It knows what projects I'm behind on, what health goals I'm tracking, what I told it last week about wanting to prioritize writing. That context is the compound interest of a second brain. And it only works if you're building the knowledge layer consistently, not just when you remember to.

If you take one thing from this post, let it be this: start building the knowledge layer before you build the agent. The agent is only as good as the context it has access to.

Try this: Before you wire up your first scheduled task or agent loop, spend a week just capturing into Obsidian. One note per project, one note per goal, one note per important person in your life. Don't worry about structure. The act of writing it down is the leverage. When you finally point an agent at it, the difference will feel like magic.

The Right Question Isn't "Which Tool?" It's "What Role?"

Right now, there's no single tool that does everything. The people who are getting real value out of AI agents aren't waiting for one to appear. They're stitching together a workflow where each tool plays a specific role.

Here's what mine looks like:

Claude Chat is for thinking on the move. Research, brainstorming, ideation, the kind of work that happens best when you're walking, not sitting at a desk. Voice mode on my phone has become my primary way to capture and develop ideas. In fact, the entire research phase of this blog post happened on an hour-long walk, going back and forth with voice mode in Claude Chat, doing web research and shaping the angles I wanted to explore. By the time I got home, I had everything I needed to hand off to the next tool in the chain.

Cowork is the creative layer where that handoff lands. Writing, editing, drafting, the compositional work that benefits from a focused interface and the ability to iterate on files directly. This entire post was outlined and drafted in Cowork after that walk.

Claude Code is the technical plumbing. Implementation, system changes, cron jobs, publishing to my website. When something needs to be built or deployed, this is where it happens. It's also how this post will go live.

OpenClaw handles life admin. Calendar management, planning around the city, day-to-day tasks. It runs 24/7 on a VPS, always available through Telegram.

Dispatch (via Cowork) gives me portable access to RonOS from anywhere. Context-rich because my full second brain is right there, but dependent on my laptop being on.

The interesting tension is between OpenClaw and Dispatch. OpenClaw is always available but context-poor. It tends to forget to check RonOS, builds up its own separate memory, drifts from my actual state. Dispatch is context-rich but availability-dependent. You can't message it at 2 AM from your phone.

This tradeoff between availability and context is exactly what KAIROS is trying to solve. An agent that's both always-on and deeply contextual. Until that ships, you're managing the tradeoff yourself. And that's okay. The point isn't to have the perfect setup. It's to have a setup that's actually working.

Start Now: The Minimum Viable Agent Loop

You don't need KAIROS. You don't need a full RonOS. You need a starting point and a willingness to iterate. Here are three levels, and you can start with any of them this weekend.

Level 1: The Daily Brief

Set up a scheduled task that pulls your context and delivers a morning summary. This is the "hello world" of personal AI agents. Simple, immediately useful, and it teaches you the fundamentals of how agents access and synthesize your information.

Tools you need: Claude Code and a cron job (or even a simple GitHub Action). If you're on OpenClaw, paste in the daily brief prompt from my last post and you're running in 20 minutes.
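Here is a minimal sketch of the "pull your context" half of a daily brief, assuming your vault is a folder of markdown files. The function names and the prompt wording are placeholders, not the prompt from my last post. The script only builds the prompt text; how you send it to a model is up to you.

```python
from datetime import datetime, timedelta
from pathlib import Path


def recent_notes(vault: Path, since: datetime) -> list[Path]:
    """Return markdown notes modified after `since`, newest first."""
    notes = [
        p for p in vault.rglob("*.md")
        if datetime.fromtimestamp(p.stat().st_mtime) > since
    ]
    return sorted(notes, key=lambda p: p.stat().st_mtime, reverse=True)


def build_brief_prompt(vault: Path, now: datetime, max_chars: int = 2000) -> str:
    """Assemble a prompt containing the notes touched in the last 24 hours."""
    sections = []
    for note in recent_notes(vault, now - timedelta(hours=24)):
        # Truncate long notes so the prompt stays a manageable size.
        body = note.read_text(encoding="utf-8")[:max_chars]
        sections.append(f"## {note.stem}\n{body}")
    context = "\n\n".join(sections) or "(no notes changed in the last 24 hours)"
    return (
        "You are preparing my morning brief. From the notes below, list "
        "what needs attention today and what I should follow up on.\n\n"
        + context
    )
```

To schedule it, wrap a call like `print(build_brief_prompt(Path("/path/to/vault"), datetime.now()))` in a small script and add a crontab entry such as `0 7 * * * python3 /path/to/daily_brief.py` (both paths are placeholders). One option is to pipe the output into Claude Code's non-interactive print mode (`claude -p`); check that against the version you have installed before relying on it.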

Level 2: The Knowledge Layer

Start building the substrate. This is what Karpathy described, and without it, everything else you build will be starting from zero every time.

You don't need 2,000 notes. Start with one domain. Pick the area of your life where you have the most context scattered across the most places (health data, career goals, a project you're managing) and start consolidating it into a simple Obsidian vault. Capture who you are, what you're working on, what matters to you, decisions you're weighing.

This feels less exciting than setting up an agent, but it's the work that makes agents actually useful. Do this first. If you want a deeper walkthrough, I covered the full setup in Building Your AI Second Brain.
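If a blank vault feels intimidating, a tiny script can lay down the stubs for you. This is a sketch under assumptions: the folder names, the note titles, and the three section headings in each stub are examples I made up, so swap in whatever fits your domain.

```python
from pathlib import Path

STUB = "# {title}\n\n## What this is\n\n## Current status\n\n## Open questions\n"


def scaffold_vault(vault: Path, notes: dict[str, list[str]]) -> list[Path]:
    """Create one stub note per item, grouped into folders.

    Existing notes are never overwritten, so the script is safe to re-run
    as you add new projects, goals, or people.
    """
    created = []
    for folder, titles in notes.items():
        for title in titles:
            path = vault / folder / f"{title}.md"
            if path.exists():
                continue  # keep whatever you have already written
            path.parent.mkdir(parents=True, exist_ok=True)
            path.write_text(STUB.format(title=title), encoding="utf-8")
            created.append(path)
    return created
```

A call like `scaffold_vault(Path("vault"), {"Projects": ["Blog redesign"], "Goals": ["Sleep 7 hours"]})` gives you empty notes to fill in. Since Obsidian reads plain folders of markdown files, you can open the result as a vault directly.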

Level 3: The Long-Running Loop

Pick one domain and set up a recurring synthesis loop. Not a daily brief (that's a summary). This is an agent that does work. It researches, synthesizes, updates your knowledge base, and surfaces insights on a schedule.
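What separates a loop from a brief is that each run reads what earlier runs wrote. A minimal sketch of one iteration, assuming a single markdown log note per domain: `run_loop_once` and the `step` callback are hypothetical names, and `step` is where your research and synthesis call would go.

```python
from datetime import date
from pathlib import Path
from typing import Callable


def run_loop_once(log_note: Path, today: date,
                  step: Callable[[str], str]) -> str:
    """Run one iteration: read prior history, do the work, append the result.

    Returns the new finding, or an empty string if today's entry already
    exists (which makes the scheduled job safe to retry).
    """
    history = log_note.read_text(encoding="utf-8") if log_note.exists() else ""
    heading = f"## {today.isoformat()}"
    if heading in history:
        return ""

    # `step` sees everything earlier runs recorded, so it can build on it.
    finding = step(history)

    log_note.parent.mkdir(parents=True, exist_ok=True)
    with log_note.open("a", encoding="utf-8") as f:
        f.write(f"\n{heading}\n{finding}\n")
    return finding
```

Run it from the same scheduler as your daily brief. Because the log is just a note in your vault, you can read it, edit it, and correct the agent when it gets something wrong.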

Start with friction, not demos. What actually annoys you every day? What do you wish someone was tracking for you? What information do you keep looking up repeatedly? Build there. The experiments that stick come from solving real daily pain points, not from impressing yourself with what's technically possible.

My health loop started because I was tired of manually cross-referencing three different apps every morning. My blog planning loop started because I kept losing track of what topics I'd researched but hadn't written. Friction first. Impressiveness never.

Try this: Open your phone's notes app and write down every small annoyance you hit this week. The five-minute thing you did three times. The data you copy-pasted between two apps. The question you re-asked Google because you forgot what you found last time. Those are your first agent loops. Pick the one that bugs you the most and start there.

The Future Is Already Here. It's Just Not Shipped Yet.

The Claude Code leak showed us what's behind the feature flags. Karpathy showed us the knowledge architecture. And the OpenClaw billing drama showed us that the economics of always-on agents are still being figured out by everyone, including the companies building the models.

None of this is finished. It's early, it's rough, and it will cost you time and money to figure out what works for your life specifically. That's the honest truth.

But the people who are building now, even imperfectly, even with duct tape and cron jobs, will be ready when KAIROS and its equivalents ship natively. They'll have the knowledge layer built. They'll understand the patterns. They'll know what actually delivers value versus what just looks good in a tweet.

The answer to "what do you actually DO with this thing?" isn't a demo. It's a system that compounds. And you have to build it one loop at a time.

So here's your weekend project. Pick one of the three levels. Set it up. Then tell me what happened. What worked? What broke? What surprised you? I read every reply, and the answers from this community are usually the best part of my week. We're all figuring this out together, and the frontier is better when we're building in public.

Want the experiments I don't post publicly? Every week in The Degenerate, I share the prompts, automations, and lessons from building RonOS in public. The wins, the failures, and the workflows I'm still figuring out. It's free, and it's the best way to keep building this with me.
